March 2004 (#100):

The Mailbag

HELP WANTED : Article Ideas

Submit comments about articles, or articles themselves (after reading our guidelines) to The Editors of Linux Gazette, and technical answers and tips about Linux to The Answer Gang.

Good incentive for publishing your personal accomplishments

Fri, 6 Feb 2004 16:48:54 -0600

Richard Bray (linx from brayra.com)

Just thought the TAG group would appreciate this story.

I wrote a small script to handle scanning of all my financial documents into

my computer. It's real simple and easy to use. Well, I have moved since I

used it, about a year ago. So, now with tax time coming, I decided it was

time to start scanning in all my financial papers and shredding the files I

do not need to keep. Plus, I can burn them to cd and have a backup for the

safety deposit box.

Well...

I did real well, when I moved last year. I didn't lose one thing. I really

mean it. I've been in the house and I haven't misplaced anything. Until last

weekend. I couldn't find that #@#%#! script anywhere. I searched all my

servers, and backup CDs. I was pretty ticked and about half way through

rewriting it, when I remembered something.

I wrote an email talking about it to my local LUG a couple years ago. After

digging in my mail for a while I found a reference that said, and I quote,

"If anyone is interested in the script scan_docs.sh, you can find it on my web

site http://www.brayra.com/downloads/scan_docs.sh"

I am an idiot.

Expect some articles from me. I have many other little programs I've written

over the years. I need to write up nice documentation for them all and post

them for the gazette. I would hate to lose them too.

[Rick]

Richard, you might perhaps appreciate a quotation from the Penguinus Maximus:

"Real men don't use backups, they post their stuff on a public ftp server

and let the rest of the world make copies." - Linus Torvalds

Immortalize those cool scripts of yours too, send them in to Linux

Gazette as either articles or nice little tips.

-- Heather

GENERAL MAIL

typo? 0wnz0red? prankster on staff?

Mon, 9 Feb 2004 16:36:40 -0800

Carla Schroder (carla from bratgrrl.com)

Reading Linux Gazette is like a treasure hunt, you never know what you'll

find. Do you mean for this to be published?

http://linuxgazette.net/issue99/lg_mail.html

Did you get cracked by a polite cracker?

A prankster on your staff?

===== Check out KNOPPIX Debian/Linux 700MB Live CD: =====

http://www.knopper.net/knoppix/index-old-en.html "C00K13 M0N573R 0WNZ J00!!

PH34R C00K13 M0N573R 4ND 0SC4R 4ND 3LM0 4ND 5NUFFL3UP46U5 4ND 7H3 31337

535AM3 57R337 CR3W!!" .dotgoeshere.

cheers,

Carla

I almost took that entry out because it seemed the first part had been

lost, but I assumed Thomas or Heather had wanted it in so I left it.

I don't remember when I wrote the original or whether I cc'd it to TAG.

-- Mike

Well, it went right over my head. Sorry! It is a funny sig, I may have to

emulate it in some fashion, like OMG WTF ROFFLE lol HAHAHAA or somesuch.

Oh, *that!* It was just an extremely cute .sig that was in the email (I

immediately stole a copy for my quote file.)

-- Ben

The original sender really had that in his sig. Thomas and I normally

strip signature blocks, but especially amusing ones that

make Linux just a little more fun - sometimes I let 'em live :D

-- Heather

[Rick]

I'm pretty sure she's referring to the tongue-in-cheek 31337-speak down

at the bottom of Dave Bechtel's letter. Unless the Cookie Monster,

Oscar, Elmo, Snuffleupagus, and the Elite Sesame Street Crew have

suddenly turned malevolent, nobody has much to fear (or is that PH33R?).

Oh, cuteness in .sigs is a well-established meme. We have nothing to

PH33R but PH33R itself.

Welcome to the influence of the bunnies! With ky00t, we will

rule the world!

-- Ben

[LG 98] 2c Tips: #4

Sat, 31 Jan 2004 05:59:02 +0000 (GMT)

Thomas Adam (The LG Weekend Mechanic)

Question by Robin Chhetri (robinchhetri from fastmail.fm)

Robin Chhetri wrote:

Thanks a lot for your answer. I will add here that I sent this question

months ago (I don't remember the exact date) and I had figured out the

solution to the problem a long time back, too.

That's OK. The particular tip was interesting, so we published it

nonetheless.

But I am indebted at least for your reply.

Not at all. You're quite welcome.

If you don't mind, could you tell me the link at which this question was

published?

Robin

It can be found here:

http://linuxgazette.net/issue98/lg_tips.html#tips.4

We also have a small laundry note about this tip:

-- Heather

[Faber] I must be missing something. If you simply want to print to

STDOUT, try this:

$( whereis libcrypto | awk '{print $3}' )

which will print to STDOUT. If you simply must put it into a variable,

then:

$robin=$(whereis libcrypto | awk '{print $3}') ; echo $robin

^^^^^^^

That is an error: in bash, prefixing a variable name with a $ sign

expands to its value, so it cannot appear on the left of an assignment. It should read:

robin=$(whereis libcrypto | awk '{print $3}') ; echo $robin

Whether Faber meant the initial '$' as the prompt or not... is

still ambiguous

-- Thomas Adam
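
To make the corrected form concrete, here is a minimal sketch. The function fake_whereis is a hypothetical stand-in for "whereis libcrypto" (its output line is invented for illustration), so the example behaves the same on any machine.

```shell
# Hypothetical stand-in for "whereis libcrypto"; the paths are made up.
fake_whereis() {
    echo "libcrypto: /usr/lib/libcrypto.so /usr/lib/libcrypto.a"
}

# Assignment: no '$' on the left-hand side, no spaces around '='.
robin=$(fake_whereis | awk '{print $3}')

# Expansion: '$' is used only when reading the value back.
echo "$robin"
```

On a real system, replace fake_whereis with "whereis libcrypto"; which field awk should print depends on what whereis reports there.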

GAZETTE MATTERS

Search page at LG now operational

Tue, Mar 02, 2004 at 09:23:41AM -0500

Ben Okopnik (LG Technical Editor)

Please take a look at it, folks. Any additions, subtractions,

corrections, anything I've missed - let me know.

Feeling Better.

Tue, Mar 01, 2004 at 14:08:41 -0800

Heather Stern (The Answer Gang's Editor Gal)

I'm pleased to say that Thomas Adam, our Weekend Mechanic, is feeling

well again, and we hope you enjoy the article he has in this month's

issue. Warm thanks to everyone who thought kindly of him while he was

recovering.

This page edited and maintained by the Editors of Linux Gazette

HTML script maintained by Heather Stern of Starshine Technical Services, http://www.starshine.org/

Published in Issue 100 of Linux Gazette, March 2004

More 2 Cent Tips!

See also: The Answer Gang's Knowledge Base and the LG Search Engine

Backporting packages.

Sun, 22 Feb 2004 02:09:05 +0000 (GMT)

Thomas Adam (The LG Weekend Mechanic)

Some of you will have asked questions like: "When will XYZ be in

stable" or "is there a backport for such and such". You can in fact

backport packages yourself. For such cases, the following procedure works:

(note: I maintain a number of backported debs, and this routine works)...

1. Add a deb-src line for sid to your sources.list. Typically:

deb-src http://www.mirror.ac.uk/sites/ftp.debian.org/debian/ unstable main

2. Run:

apt-get update

3. Run:

apt-get build-dep <package> && apt-get -b source <package>

(where: <package> refers to the package name in question). What this will

do is install the build dependencies for the given package, and then will

build the package.

4. All that is left then is to do:

dpkg -i ./deb_files.deb
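
The steps above can be strung together in a small helper. This is only a sketch: the function name backport and the DRY_RUN switch are made up for illustration, and it assumes the deb-src line from step 1 is already in sources.list. By default it only prints the commands, so nothing is installed by accident.

```shell
# Print (or, with DRY_RUN=0, actually run) steps 2 and 3 for one package.
# Assumes a deb-src line for unstable is already in sources.list.
backport() {
    local pkg=$1 run=echo
    [ -n "$pkg" ] || { echo "usage: backport <package>" >&2; return 1; }
    [ "${DRY_RUN:-1}" = "0" ] && run=
    $run apt-get update &&
    $run apt-get build-dep "$pkg" &&
    $run apt-get -b source "$pkg"
}
```

Calling "backport hello" prints the three apt-get commands for a package called hello; "DRY_RUN=0 backport hello", run as root, executes them. The resulting .deb files still have to be installed with dpkg -i as in step 4.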

Pushing files to multiple hosts

Mon, 2 Feb 2004 10:20:58 -0500

Ben Okopnik (LG Technical Editor)

When I teach a class, I often need to push one or more files to my

students' systems. Previously, I would write a "for-do-done" loop and

use "scp" to get the files across, laboriously logging in and exiting

out of each system every time I wanted to do a transfer - painfully

clunky.

Then I did some searching on the Net and found "sshtool" by "noconflic".

Written in Expect, it allows multiple host logins and copying. However,

it did not have a "no password" mode (i.e., logging in when

".ssh/authorized_keys" contains your key) and read the list of hosts

from a list defined within the program. I've modified it to read an

external file called "pushlist" and added a "no password" mode; this

last, of course, requires that you first push a "~/.ssh/authorized_keys"

to the host list.

See attached sshtool.expect.txt

First, create your "pushlist", possibly from an "/etc/hosts" on one of

the local machines. It should contain all your target hosts, one line

per host. Next, create your ".ssh/authorized_keys" in the directory

where you keep "sshtool" by copying your public keys into it:

ben@Fenrir:~/sshtool$ mkdir .ssh; cat ~/.ssh/*pub > .ssh/authorized_keys

Then, push it out to your hosts (NOTE: this replaces the remote hosts'

"authorized_keys" files!):

# Log in as user "student" and send the local file

ben@Fenrir:~/sshtool$ ./sshtool -c .ssh/authorized_keys student

After this, I can upload any file or list of files to the entire pushlist simply by typing

ben@Fenrir:~/sshtool$ ./sshtool -C <file[s]> student

I can also execute a command on all the systems via the "-U" option.

Note: I'm not an Expect programmer; otherwise, "sshtool" would accept a

"local:remote" syntax so files wouldn't need to be in identical

locations. It would also allow you to specify per-host usernames in the

push list (not an option I need, but something to make it more

flexible.) Anyone adding these features - please send me a copy.
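
Once the keys are in place, a plain "for-do-done" loop no longer needs a password for each host either. The following is a rough sketch of that alternative, not a replacement for sshtool: the function name push_files and the PUSHLIST and DRY_RUN variables are invented here. It reads the same one-host-per-line pushlist and, by default, only prints the scp commands it would run.

```shell
# Copy the given files to the same path on every host in the push list.
# Usage: push_files <user> <file>...
# Prints the scp commands by default; set DRY_RUN=0 to really copy.
push_files() {
    local user=$1 host file run=echo
    shift
    [ "${DRY_RUN:-1}" = "0" ] && run=
    while read -r host; do
        [ -n "$host" ] || continue          # skip blank lines
        for file in "$@"; do
            # BatchMode makes scp fail rather than prompt for a password;
            # </dev/null keeps scp from eating the rest of the host list.
            $run scp -o BatchMode=yes "$file" "$user@$host:$file" </dev/null
        done
    done < "${PUSHLIST:-pushlist}"
}
```

For example, "push_files student notes.txt" lists one scp command per host, and "DRY_RUN=0 push_files student notes.txt" performs the copies. Like sshtool, it assumes the file lives at the same path on both ends.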

compression speed

Tue, 3 Feb 2004 10:48:08 -0500

Ben Okopnik (LG Technical Editor)

Based on something I saw on the "swsusp" list, I've done a bit of

experimentation with "lzf" compression. It's not any more effective,

size-wise, than some of the common compression utilities - in fact, it's

less so in many cases. What it is, however, is fast.

Results for compressing my 45MB "Sent_mail" box:

rar 0m46.314s

bzip2 0m29.840s

arj 0m7.396s

zip 0m7.008s

gzip 0m6.756s

compress 0m3.094s

lzf 0m0.997s

File sizes:

47668763 Sent_mail

35446476 Sent_mail.lzf

32227703 Sent_mail.Z

25119004 Sent_mail.arj

24836842 Sent_mail.zip

24836720 Sent_mail.gz

23355061 Sent_mail.bz2

22877972 Sent_mail.rar

For applications where speed matters more than size, "lzf" is clearly a

win. For size where speed is not an issue, it's "rar" (which matches the

results of my previous, much broader testing with many file types and

scenarios.)
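
To put the size listing on a common scale, the byte counts can be turned into percentages of the original. This sketch uses only the figures quoted above; the helper name size_pct is made up.

```shell
# Size of a compressed file as a percentage of the original, one decimal.
size_pct() {
    awk -v c="$1" -v o="$2" 'BEGIN { printf "%.1f\n", 100 * c / o }'
}

orig=47668763    # Sent_mail, from the listing above
while read -r size name; do
    printf '%-14s %5s%%\n' "$name" "$(size_pct "$size" "$orig")"
done <<'EOF'
35446476 Sent_mail.lzf
24836720 Sent_mail.gz
23355061 Sent_mail.bz2
22877972 Sent_mail.rar
EOF
```

By this measure "lzf" leaves roughly 74% of the original size and "rar" roughly 48%, which is the size half of the speed-versus-size trade-off described above.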

2.6.2 kernel woes: Will not find root fs

Thu, 5 Feb 2004 16:14:18 -0500

dann (dann from thelinuxlink.net)

I have been compiling and recompiling the 2.6.1 and 2.6.2 kernels the past

three days trying to find a configuration that will work for me. I have

performed many kernel compiles in the past and never had this problem

occur on my machine which is currently running 2.4.24.

This is the error I get when I boot into the 2.6.1 or 2 kernel:

VFS: Cannot open root device "302" or hda2

Please append a correct "root=" boot option

Kernel Panic: VFS: Unable to mount root fs on hda2

[Thomas]

Believe it or not I had this and it was related to a ramdisk issue. Try

adding:

append="ramdisk_size=5120"

to /etc/lilo.conf

and then:

/sbin/lilo -v

Reboot and pray.

Now I have done some searching around google and saw that other people

have had this problem. I have implemented a number of suggestions they

were given but nothing has been fruitful. This is what I have tried:

Verified the following are compiled in (which they are):

CONFIG_IDE=y

CONFIG_BLK_DEV_IDE=y

CONFIG_BLK_DEV_IDEDISK=y (I have tried both IDE and IDEDISK separately

also)

CONFIG_EXT2_FS=y

CONFIG_EXT3_FS=y
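
A check like the one in this list can be scripted rather than eyeballed. The sketch below is an illustration only (the function name check_builtin is invented): it reports any option that is not set to "=y" in a kernel .config. Options built as modules ("=m") are reported as missing, on the assumption that no initrd is there to load them before the root filesystem is mounted.

```shell
# Report which options are not built in (=y) in a kernel .config file.
# Usage: check_builtin <config-file> <OPTION>...
# Returns non-zero if anything is missing.
check_builtin() {
    local config=$1 opt missing=0
    shift
    for opt in "$@"; do
        if grep -q "^${opt}=y\$" "$config"; then
            echo "ok      $opt"
        else
            echo "MISSING $opt"
            missing=1
        fi
    done
    return "$missing"
}
```

For example: check_builtin .config CONFIG_IDE CONFIG_BLK_DEV_IDE CONFIG_BLK_DEV_IDEDISK CONFIG_EXT2_FS CONFIG_EXT3_FS, run from the top of the kernel source tree.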

I have removed support for Advanced Partitions.

Should have no effect -- I support advanced partitions all the time in

my kernels so I can mount other-OS drives in my lab station.

-- Heather

I have toggled between DEVFS support (initially I said no, but enabling

does not seem to make a difference anyway).

By the time devfs really causes pain you're in userland already - you

didn't get that far. Didn't I hear a rumor they're deprecating it?

-- Heather

I verified my settings in /etc/lilo.conf were correct. I even tried

passing the root=/dev/hda2 parameter to the kernel at boot.

Nothing has worked.

I have tried to see if there are any error messages during the boot but

where I would suspect there being an error message, it scrolls by way too

fast. Nothing gets logged at this point either.

As I said, I have been running 2.4.24 for a bit now having patched that

from 2.4.9 along the way. My distro is slackware-current which reports to

have support for the 2.6.x series kernels.

Any further suggestions would be much obliged.

Thanks for your time.

[dann]

I fell prey to the post to TAG curse again, which usually has me finding

the answer within a few hours of emailing TAG.

I had replaced a failing drive about 6 months back with a used drive I

picked up along the way. This drive had EZ-Bios installed in the boot

sector. Initially I was concerned with this but when I had no problems

with running linux after I transferred over my partitions, I put it out of

mind a bit too far.

I compiled a 2.6.2 kernel enabling everything possible under the IDE

device drivers into the kernel. This slowed down the boot process enough

for me to see this line:

/dev/ide/host0/bus0/target0/lun0 p1[EZD]

Sure enough, I knew EZBios was going to come back and bite me one day. I

guess EZBios was somehow preventing the kernel from seeing the drive

properly.

After removing EZBios the 2.6.2 kernel booted without a complaint.

Thanks for the suggestions, I appreciate your time and effort.

[Ben]

Surely that would be "The TAG blessing" rather than "curse", Dann?

All you do is write to TAG and shortly thereafter get your answer. What

could be better?

That is true. Perhaps I should take advantage of that blessing more often

and post sooner. Maybe the luck will work the other way. Instead of

three days of trial and error, post on day one and the answer will appear.

[Ben]

(Yes, we managed to enlist the Universe and The Gods of Fate and Time in

helping us. We thought the negotiations would be tough, but, you know,

Gods are intelligent beings and therefore use Linux. It was a shoo-in.)

Well heap some more offerings on the pyre. I'm going in for another round

of video capture and editing soon!

Live Linux CDs

Wed, 18 Feb 2004 19:45:58 +0000 (GMT)

Thomas Adam, Raj Shekhar, Ben Okopnik (The LG Answer Gang)

Hi all,

Someone on my LUG found a really useful site[1] that has a list of all the

Live Linux CDs that are available. Not just Knoppix you know!

-- Thomas Adam

[1] http://www.frozentech.com/content/livecd.php

[Raj]

A lot of effort is going into creating regional-language flavors of

Linux. Linux + Live CDs has provided fertile ground for the

internationalization of software and for demoing the capabilities of Linux to

people.

For example, one of my friends demoed a Bengali version of Knoppix

(Ankur Bangla Linux) at LinuxAsia 2004, held in Delhi, India. It was

a great hit. People watched open mouthed as he typed away happily on

gedit to produce a small Bengali poem.

[Ben]

Oh, excellent! This is sorta the "dark area" of computers - generally

solved by "simply" learning English. Not that I mind the world moving

toward a common language, but the exclusion field and the entry

requirements are keeping the computer culture very small compared to

what it could be.

I'm really looking forward to the day when someone invents an input

method that is multilingual, portable, and at least as fast as a

keyboard (they'll be billionaires overnight.) I've heard of various

"fist keyboards" like the Twiddler and OrbiTouch, but... we're not quite

there yet.

script for finding ssh-agent at login

Mon, 2 Feb 2004 14:02:38 +0100

Karl-Heinz Herrmann, Ramon van Alteren (The LG Answer Gang)

Hi,

I ran into an annoying problem with ssh-agents. If you don't start one

on the very first login screen from which you start X you can't access

the agent from any xterm started from the window-manager. Starting a new

one is not a good idea if one is already running. This script will look

for a running ssh-agent and set the environment variables so it can be

contacted. If none is running it will start one. On an ssh login with

"agent forwarding" enabled, the environment variables will already be set

and the remote ssh-agent (on the machine you are connecting from) will be

used.

See attached sshssearch-old.bash.txt

Unfortunately, there has been a change in the "interface" of "ssh-add -l":

before, it gave exit code 0 for "agent is there, with or without

keys" and 1 for "no agent".

Now it's more fine-grained: 0 for "agent with keys", 1 for "agent without

keys" and 2 for "no agent".

See attached sshssearch-new.bash.txt

Of course, you have to "source" the script to set the local environment

variables:

source sshsearch.sh

or

. sshsearch.sh

To make it automatic, call it from .profile (or .bashrc).

Ben (or whoever feels it's too clunky): feel free to make it into a

one-liner

K.-H.
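
The attached scripts are not reproduced here, but the newer exit-code logic described above can be sketched roughly as follows. This is not Karl-Heinz's script: the function names are invented, and the /tmp/ssh-*/agent.* socket pattern is an assumption about where ssh-agent places its sockets.

```shell
# Translate the exit status of "ssh-add -l" (newer behaviour) into a word.
agent_state() {
    case $1 in
        0) echo "agent-with-keys" ;;
        1) echo "agent-without-keys" ;;
        2) echo "no-agent" ;;
        *) echo "unknown" ;;
    esac
}

# Try each candidate socket we own; export the first one that answers.
find_agent() {
    local sock rc
    for sock in /tmp/ssh-*/agent.*; do
        [ -S "$sock" ] && [ -O "$sock" ] || continue
        SSH_AUTH_SOCK=$sock ssh-add -l >/dev/null 2>&1
        rc=$?
        if [ "$rc" -le 1 ]; then        # 0 or 1: an agent is listening
            export SSH_AUTH_SOCK=$sock
            return 0
        fi
    done
    return 1
}
```

After sourcing the file, something like find_agent || eval "$(ssh-agent -s)" reuses a running agent if one answers and starts a new one otherwise. As with the original, it must be sourced, not executed, for the variables to reach the calling shell.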

[Ramon]

I'm familiar with the problem and found a small tool to deal with it.

It was written by Daniel Robbins.

Here's the relevant part of the manpage:

...............

NAME

keychain - a program designed to keep ssh-agent processes alive across

multiple logins.

DESCRIPTION

Keychain is an OpenSSH key manager, typically run from ~/.bash_profile.

When run, it will make sure ssh-agent is running; if not, it will start

ssh-agent. It will redirect ssh-agent's output to

~/.keychain/[hostname]-sh, so that cron jobs that need to use ssh-

agent keys can simply source this file and make the necessary passwordless ssh

connections. In addition, when keychain runs, it will check with ssh-agent

and make sure that the ssh RSA/DSA keys that you specified on the keychain

command line have actually been added to ssh-agent. If not, you are prompted

for the appropriate passphrases so that they can be added by keychain.

...............

Although it creates a security risk, (don't leave any consoles open

unattended, all your keys are cached) I've found it extremely pleasant to

work with.

Here's the link:

http://www.gentoo.org/proj/en/keychain.xml

Can't beat homegrown scripts though

It's too much fun to make 'm.

Hope it's useful

Xine problem

Fri, 06 Feb 2004 11:33:02 +0530

Aditya Godbole (aditya_godbole from infy.com)

I am using RH8 linux and successfully installed xine for video play.

Video cds(.dat format) are functioning well with xine. But I cannot

play the video files (in .dat format) copied to hard disk.

Hi,

Rename the files from .dat to .mpg or .mpeg. Works for me.

Regards,

Aditya Godbole.

Even the laws of nature cannot produce the right results unless

the initial conditions are entered correctly.

Prof. Yash Pal

(Techfest 2004)
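
For a whole directory of copied files, the rename suggested above can be done in one go. A minimal sketch, with an invented function name:

```shell
# Rename every *.dat file in a directory (default: current) to *.mpg.
dat_to_mpg() {
    local dir=${1:-.} f
    for f in "$dir"/*.dat; do
        [ -e "$f" ] || continue     # no matches: the glob stays literal
        mv -- "$f" "${f%.dat}.mpg"
    done
}
```

Run it as "dat_to_mpg ~/videos" (the directory name is only an example); files without a .dat extension are left alone.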

Floppies on CD - the ultimate collection

09 Feb 2004 20:20:38 -0500

Suramya Tomar (suramya from suramya.com)

Hi,

This is a cool tip. For people who are too lazy to do all the work

(like me) they can download a program called the Ultimate Boot CD which

allows you to run floppy-based diagnostic tools from CDROM drives.

For information on the CD and the tools included with it visit:

http://www.ultimatebootcd.com. You can download it from the above site

or from my mirror at: http://mirror.suramya.com.

The site also has instructions on how to customize the CD for your

specific needs.

Hope you all find it as useful as I do.

- Suramya

Local Eth/Internet PPP can work together

Sat, 21 Feb 2004 20:57:17 -0500

Jack Sprat (trashcan from chilitech.com)

On RedHat/Fedora, if only the subnet your computer is part of needs to

be accessed over the LAN card, I believe this simple trick will work. If

not, it is easy to undo.

On set up of the network, simply do not enter an IP for the gateway. If

this is already configured then shut down your network

(/etc/rc.d/init.d/network stop) and remove the "GATEWAY" line from

/etc/sysconfig/network-scripts/ifcfg-eth0. Restart your network and the

"route" command should show no default gateway, but also a route via

eth0 to the subnet your computer is on. Something like :

192.168.0.0 * 255.255.255.0 U 0 0 0 eth0

kppp should then happily create a default route to ppp0 when executed.

Ron H.

No need to shut it down, just do:

route del default gw <IP_ADDR>

You'll need to be root to do it.

-- Thomas Adam
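
To make the change survive a reboot, the "GATEWAY" line still has to go from the ifcfg file. The sketch below comments the line out instead of deleting it and keeps a backup, so it stays "easy to undo"; the function name drop_gateway is invented for illustration.

```shell
# Comment out any GATEWAY= line in an ifcfg file, keeping a .bak copy
# next to it so the change is easy to undo.
drop_gateway() {
    local cfg=$1
    cp -p -- "$cfg" "$cfg.bak" &&
    sed 's/^GATEWAY=/#&/' "$cfg.bak" > "$cfg"
}
```

As root: drop_gateway /etc/sysconfig/network-scripts/ifcfg-eth0. Copying the .bak file back over the original restores the old setting.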

Sometimes it's not the website

Fri, 5 Dec 2003 10:31:03 -0800

Mike Orr (Linux Gazette Editor)

Question by Raj Shekhar (rajshekhar3007 from yahoo.co.in)

I'm still not seeing it there. The entries are alphabetical and go

from "Firebird Modern" directly to "Lush", and I can't find

"LittleFirebird" on the page anywhere.

after some poking around...

-- Heather

This is really strange. I checked again and I can see LittleFirebird

theme. I asked other people to check it and they could not find it

either.

No idea why this is happening. I am on broadband connection. My ISP

(Sify broadband) has put a LAN in the neighbourhood and we connect

through a proxy server. Do you think this could be an issue with the

cache ? (The other people I asked to check were not part of the ISP's

LAN)

[Mike]

Either your ISP is not updating the page properly, or your browser

isn't. I assume you've done shift-reload, restarted the browser, or

tried a different browser. Sometimes the browser cache can be subtle

and stubborn, although I've had fewer problems with that since I stopped

using Netscape 4. If your ISP has a malfunctioning proxy server, I

guess there's nothing you can do except tell them to fix it.

Securing a dial in?

Fri, 13 Jun 2003 10:45:13 -0400

John Karns (The LG Answer Gang)

Question by George Morgan (George_Morgan from sra.com)

Hello answer guy,

I need to be able to secure an external modem that has been connected to a

Solaris box to protect against unauthorized calls. What I mean is that I

want to be able to allow people to connect to the box based purely on the

phone number they are calling from. Is there a way on the modem to only

allow certain calls to go through while rejecting all other calls?

Thanks,

George

[John Karns]

See the "mgetty" open source pkg (search http://www.google.com/linux for it).

It offers this capability, provided that your modem line has caller id.

The pkg includes pretty good documentation as well as good example cfg

files.

Full r/w to NTFS from Linux

Sat, 06 Dec 2003 00:19:18 -0800

James Sparenberg (james from opencountry.org)

Thought Linux Gazette might like this one. A project called Captive

has taken a Wine-like approach, combining some features from

ReactOS with the Microsoft Windows ntfs.sys driver, and is actually getting full

r/w access this way.

http://www.jankratochvil.net/project/captive

Is the URL.

James

re: Renaming Ethernet Devices

Thu, 26 Jun 2003 14:36:55 -0700

Ryan White (ryanw from niuhi.com)

In response to 2 Cent Tip #14 in issue 64 (http://linuxgazette.net/issue64/lg_tips64.html#tips/14) which itself claims to refer back to February 2000 (issue 50). Must be a y2k bug, though, because I couldn't find the more ancient reference myself. The fact is, this hasn't changed any, the tip is just as valid as ever, and more useful now that more people might use multiple ethernet cards to run their house LANs. Enjoy.

-- Heather

After reading your post I found this. I figured it would help someone.

http://www.scyld.com/expert/multicard.html

Anonymous batch FTP -> SFTP

Sat, 20 Dec 2003 14:19:58 +0100

Carol Meertens (c.meertens from geog.uu.nl)

Until recently we had a remote machine doing a nightly FTP-job over anonymous FTP to a local machine. Both machines have ssh2 installed, so we started using sftp instead. Here's how we did it:

On local machine:

- create a normal user sftp

- mkdir /home/sftp/.ssh/

On remote machine:

- su <user-who's-doing-the-nightly-jobs>

- ssh-keygen -t dsa

- give ~/.ssh/id_dsa.pub to admin of local machine

On local machine:

save contents of retrieved id_dsa.pub into /home/sftp/.ssh/authorized_keys

On remote machine:

sftp sftp@local_machine

That's it. To make the sftp-account more restricted, we use scponly (http://www.sublimation.org/scponly).
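
For the nightly job itself, the interactive sftp call has to become non-interactive. One way to sketch this, assuming OpenSSH's sftp and its -b (batch file) option, is shown below. The helper name make_batch and the file names in the usage note are hypothetical; the helper only writes the batch file and prints the command a cron job would run.

```shell
# Write an sftp batch file that uploads one file, then print the sftp
# command that would run it against the sftp account set up above.
make_batch() {
    local batch=$1 src=$2 dest=$3
    printf 'put %s %s\nbye\n' "$src" "$dest" > "$batch"
    echo "sftp -b $batch sftp@local_machine"
}
```

For example, "make_batch /tmp/nightly.batch results.tar.gz results.tar.gz" writes a two-line batch file and prints "sftp -b /tmp/nightly.batch sftp@local_machine". Because the key was generated for the user doing the nightly jobs, that command has to run as the same user.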

- close that audio stream

25 Jun 2003 10:02:48 -0400

Allan Peda (pedaa from rockefeller.edu)

Third time's a charm

Last night I left my zinf (streaming audio) player running. I felt

bad because doing so wasted bandwidth playing music to a muted

amplifier in an empty room. Here is my bash solution, a la

run-mozilla.sh

[allan@array14 workarea]$ cat ~/bin/run-zinf.sh

#!/bin/sh

# June 25, 2003

# Kills zinf after HR_LIMIT

AUDIO_STREAMER="/opt/bin/zinf"

HR_LIMIT=8

"$AUDIO_STREAMER" "$@" &

echo "killall ${AUDIO_STREAMER}"| at now +${HR_LIMIT} hours

[allan@array14 workarea]$

As a general note, just want to remind folks ... do send in your answers

and tips of all sorts! In case you're wondering to where -- that's

tag@linuxgazette.net. They don't always get published in the month we

receive them, but we do collect them and mix them up a bit. And

sometimes we find strays -- this one had been sent to the editors, not

to the normal tips-and-tag mailbox.

-- Heather

X is Smarter Now

Sat, 7 Jun 2003 15:41:24 -0500

Chris Gianakopoulos (The LG Answer Gang)

Hello Gang,

I have a NEC MultiSync 77F monitor and a Matrox Millennium II video card. When

running the SuSE configuration program Sax, X configuration occurs sort of

automatically.

All parameters were correct except the modelines associated with my monitor.

I say this because the horizontal centering was incorrect when running X.

I tried modelines generated via the XFree86 3.3.6 version of xf86config, and

incorporated the modelines generated from that tool. Those modelines were

proper and usable for XFree86 4.3.0.

As I read on, I saw that X is smart enough to figure out the appropriate

timing without modelines. Thus, I deleted all of the generated modelines, and

now the Modes section looks like this.

Section "Modes"

Identifier "Modes[0]"

EndSection

The file that I edited is:

/etc/X11/XF86Config

I hope that this helps other SuSE 8.2 users.

[Heather]

The flip side of this clue is just as important; if you're on a more

modern setup that doesn't generate modelines because the internally

generated ones will do, but you don't like them and feel they can be

improved, then all the old tuning tricks will still work, as will

modelines found on the net that match your monitor more perfectly.

The Answer Gang

By Jim Dennis, Ben Okopnik, Dan Wilder, Breen, Chris, and... (meet the Gang) ... the Editors of Linux Gazette... and You!

We have guidelines for asking and answering questions. Linux questions only, please.

We make no guarantees about answers, but you can be anonymous on request.

Contents:

- ¶: Greetings From Heather Stern

- shell and pipe question

- Font rendering with GTK-2.0

- Radeon 7500 PCI Card Monitor Autodetect - My Solution

- Updating Libc and Gcc Support on Older Distros?

- What are the top five webmail applications of the Open Source world

- suppress terminal messages of other processes

- building MP3 playlists

Greetings from Heather Stern

Greetings, folks, and welcome to the world of The Answer Gang. It's

been a fine time getting up to speed in our new digs, but now I'm

indulging in some spring cleaning.

Taking a look at my hard disk I'm way overdue, too. Let me see...

multiple chroot environments being used as development areas, some of

which distros aren't even supported by their own vendors anymore.

Rescue a few bits of actual code trees, then tarball these things off to

a CD, Poof! Hey, that's not too bad. What else can I toast here?

(BTW, has the state of the art in DVD burning gotten anywhere close to

"just buy one at the computer store and Linux will deal with it" quite

yet? I've been too lazy to check.) Some stray PDFs while I was

planning my kitchen remodel... from years ago. I haven't even started

thinking about cycling off backup tarballs yet.

Then there's mail cleanup. I've got quite an email humor collection. There

ought to be lots of dupes in that, but it'll be amusing reading all

through the summer to clear my way past it all, deleting attached pics

that aren't funny, and making a little gallery of ones that are. (There

are oodles of gallery software available on Freshmeat, but I probably

won't make it public; my bandwidth isn't up to that.) While not a

direct relation to how much disk space I'm eating, some serious antispam

principles could at least do spring cleaning on my available time.

Jim's been thinking of instituting greylisting on our mail server.

Now I have to note the term is a mite overloaded. I mean, we've been

talking about whitelists (our good friends) and blacklists (mail never to

be seen again, whether you use the RBL/MAPS servers and their kindred or

not) but grey, what's that? The fact is that just seeing your friend's

raw address isn't nearly enough; viruses steal names out of address

books freely to spoof headers. Clued people like me can add header

checks to spot they are really coming from their expected route or mail

agent ... but those can change and when they do, your friends will be

out of contact. Ouch. Also, mailing lists that are very open (hmm, do

I know any of those? *wink*) are often targeted by spammers who

hope their mail will hit many at once, while their traces are hidden by

standard list-header mangling. The fact is that even the good guys need

a little checking, and anyone who needs to receive mail from

completely unknown people has to do something serious. Separating off

how much checking to really do reduces CPU load for some cases, but that

is where it gets a bit greyer. There's ASK and TMDA for making new folk

at least reply before you'll talk to them. This greylisting practice is

pleasantly sneaky, doing something similar but at the transport layer.

Systems run by real people sending real messages really have to have

mechanisms for trying again if a server's too busy, a little overloaded,

maybe drop back and visit the secondary MX. But spammers don't have

time to waste on all that - they've umpty squadzillions other suckers

to mail. So greylisting responds with a temporary error condition, but

logs who came by; at some vaguely random later point but within popular

and reasonable human timeouts it will accept the mail from that server

knowing it's a repeat call... and the chances that it's someone utterly

normal increase drastically. And even the spammers who follow normal

protocol will be stuck hosting their own stupid mail during the delay,

costing them resources instead of legitimate ISPs. The downside

is not really getting instantaneous notes from your correspondents,

but I'm sure this can be tuned a bit.
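
The decision at the heart of greylisting is small enough to sketch in a few lines. This is a toy illustration under stated assumptions, not how any particular mail server implements it: it keys on the (sender IP, envelope from, envelope to) triplet, keeps its log in a plain text file, uses a fixed 300-second minimum wait, and all names in it are invented.

```shell
# Decide what to do with a delivery attempt.
# Usage: greylist <db-file> <now-epoch> <sender-ip> <from> <to>
# Prints "451 try again later" or "250 accept".
GREY_DELAY=${GREY_DELAY:-300}    # assumed minimum wait, in seconds

greylist() {
    local db=$1 now=$2 key="$3|$4|$5" first
    touch "$db"
    # Look up when this triplet was first seen, if ever.
    first=$(awk -F'\t' -v k="$key" '$1 == k { print $2; exit }' "$db")
    if [ -z "$first" ]; then
        printf '%s\t%s\n' "$key" "$now" >> "$db"   # first sighting: log it
        echo "451 try again later"
    elif [ $((now - first)) -lt "$GREY_DELAY" ]; then
        echo "451 try again later"                  # retried too quickly
    else
        echo "250 accept"                           # a patient, real MTA
    fi
}
```

A first attempt from an unknown triplet gets the temporary error and is logged; a retry after the delay is accepted. A sender that never retries simply never gets through, which is the whole trick.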

Of course, you could always just take the classic techie's option - get

a bigger hard disk. With all that I'm up to in my development and

experimenting, it looks like I'll have to do that. Hah. Watch me

complain, twist my arm.

Now I should really figure out about backing up this stuff in a way that

would be easy to restore if I have a problem... um, on something that

doesn't take more space than the original does. Otherwise trying to

clean up the house in general will have us wondering where to put all

this. Gosh, laptop drives are getting better capacities these days.

Much easier than dealing with tapes and discs, a mere 4G per disc

disappears pretty quickly nowadays, worse if you're sticking with CDs.

I'm about to attend a music convention; my crew's running an Internet

Lounge at Consonance. So all

the older systems in miscellaneous condition are being brought up to

speed and truly dead parts are finally being tossed. Wow. I'm starting

to have shelf space in my hardware cabinet again. That's more like it.

And of course, I've improved the preprocessing scripts I use to match

the new stuff we have going on here. So I'm pleased to reintroduce the

TAG in threaded form. Thomas did nearly all the work marking it up (for

which we can thank his Ruby scripting talents) but the layout tricks are

still mine. Let us know if you find any dust that still needs cleaning

out of 'em. Have a great month, folks.

Readers with good Linux answers of their own, in local mailing

lists or published to netnews groups are encouraged to copy The Answer

Gang their good bits if they're inclined to see their good thoughts

preserved in the Linux Documentation Project, by way of the Linux

Gazette. Ideally the answers explain why things work in a friendly

manner and with some enthusiasm, thus Making Linux Just A Little

More Fun! If they're short and sweet they'll be in Tips, longer

ones may be pubbed here in TAG. But we don't promise that we can

publish everything, just like we don't promise that we can answer

everyone. And last but not least - you can be anonymous if you'd prefer,

just tell us when you write in.

Published in Issue 100 of Linux Gazette, March 2004

News Bytes

By Michael Conry

Selected and formatted by Michael Conry

Submitters, send your News Bytes items in

PLAIN TEXT

format. Other formats may be rejected without reading. You have been

warned! A one- or two-paragraph summary plus URL gets you a better

announcement than an entire press release. Submit items to

bytes@linuxgazette.net

Legislation and More Legislation

Jon Johansen

As has been reported in previous months, Jon Johansen, the Norwegian man

charged in relation to the DeCSS computer code, has been successful in his

legal travails. Now, it has

been reported that he is going to attempt to turn the tables

and seek compensation from the Norwegian white collar crimes unit.

PATRIOT Act

EFF is taking a weekly look at the various provisions of the PATRIOT act which

could/should be allowed to lapse in December 2005. The first provision

studied is

Section 215 allowing the FBI access to your private records.

DVD CCA and DeCSS

The

DVD Copy Control Association (also known as the DVD CCA) has

abandoned its case against Andrew Brunner. Brunner found himself at

the sharp end of legal action as a result of having distributed the DeCSS

computer code on his website. The thrust of the DVD CCA legal action was to

assert that Brunner was a violator of trade secret laws. However, the

legal action taken by the DVD CCA, which was one of many cases, proved

unsuccessful in halting the global distribution of the computer code, which

is now anything but secret.

The Electronic Frontier Foundation maintains a

DVD-CCA v. Brunner case archive.

Linux Links

GNUstep Live CD (based on Morphix)

Converting an existing system to

use the 2.6 kernel.

An interesting article by B. D. McCullough

on errors in the statistics

functions of Excel and Gnumeric. The two packages shared some of the same

errors; Gnumeric has been fixed, Excel has not. (Courtesy Linux Today.)

developerWorks on

the improvements in Linux kernel development from 2.4 to 2.6.

IT Manager's Journal sees

embedded Linux as a disruptive force.

PC World takes a look at Mozilla.org's new Firefox web browser

Six Things First-Time Squid Administrators Should Know

Which Linux distribution is the most popular? Which is growing fastest?

Linux and the distributed chess-brain.

Munich's switch to Linux facing a bumpy road

Tux Cookies?

Tripwire on your Fedora Box

News in General

Nonprofit Open Source Primer

NOSI

(Nonprofit Open Source Initiative), has released a primer document for

nonprofit bodies considering the use of open-source alternatives to

closed-source applications.

The PDF document can be

downloaded from their website

Linux

The

Linux kernel

has been updated to a new stable version: 2.6.3.

Changelog is available.

The old stable line has also received an update, to a

new version: 2.4.25, while the previous stable tree has also seen a

fresh addition in

version 2.2.26.

Firefox

The browser formerly known as Firebird (and before that as Phoenix) has now

changed its name to Firefox. Though you may be interested to read the

background FAQ to the name-change, it is probably more useful to look

at the new features included in this release

wxWindows

wxWindows is to change name

to become wxWidgets following pressure from Microsoft regarding

possible trademark infringement of Microsoft's "Windows" name. The

agreement appears to be relatively amicable.

The Battle for Wesnoth

The Battle for Wesnoth is a fantasy turn-based strategy game.

Nicely, it has been released under the GPL, and can be used on

GNU/Linux, Windows, MacOSX, BeOS, Solaris and FreeBSD. You can read about

the game on the

project website.

Linux Gaming Planet has also posted a review of the game, including an

interview with the project originator, David White.

Distro News

Debian

Why Linux, Why Debian?

Debian Kernel 2.6 HOWTO

Fedora

Fedora, as reported by eWeek, appears to be

the first major Linux 2.6 based distribution.

LXer has

a review of the 2.6 based distro online.

Linux From Scratch

The LFS Development Team

has announced the release of LFS-5.1-PRE1, the first pre-release of the

upcoming LFS-5.1 book. You can read it online, or download the book
to read it locally, at
http://www.linuxfromscratch.org/

This being a test release, the team would appreciate any feedback, in

particular bugs in the installation instructions. Any and all feedback

should be sent to the lfs-dev mailing list.

Xandros

The Register has

reviewed Xandros Linux. "User-friendly to a fault".

Software and Product News

REALbasic 5.5

REAL Software has released

REALbasic 5.5 Professional Edition. This

software enables developers to compile Visual Basic source code under

Linux.

AP Intelligent Mail Switch™

Secluda Technologies

has launched the AP

Intelligent Mail Switch, an SMTP perimeter-gateway solution for e-mail

productivity. With the company's existing product InboxMaster®, the AP

Intelligent Mail Switch gives IT professionals greater ability to monitor

and manage e-mail environments; improve the performance and reliability of

e-mail applications including anti-spam filters, virus scanning, and e-mail

servers; and prevent false positives and other problems caused by e-mail

filters.

Secluda's AP Intelligent Mail Switch runs on SUSE, Red Hat, and Mandrake

Linux, and is priced on a user/server basis starting at $195 up to $3,995

for an unlimited server license.

Mick is LG's News Bytes Editor.

![[Picture]](../gx/2002/tagbio/conry.jpg) Born some time ago in Ireland, Michael is currently working on

a PhD thesis in the Department of Mechanical Engineering, University

College Dublin. The topic of this work is the use of Lamb waves in

nondestructive testing. GNU/Linux has been very useful in this work, and

Michael has a strong interest in applying free software solutions to

other problems in engineering. When his thesis is completed, Michael

plans to take a long walk.

Fvwm and Session Management

By Thomas Adam

Introduction

With all the hype and attention surrounding desktop managers such as KDE and

Gnome you could be

wondering "why bother using other window managers when those have got

everything included in them?" Integrated file managers, nice shiny gadgets,

etc. The answer is simple. Both KDE and Gnome take up vast amounts of memory,

and if, like me, you have aging hardware, you often look for alternatives that will make

your system usable.

You might think that, as KDE and Gnome have everything the user ever wanted,
why bother changing? Or, to put it another way: is there some way that I can
emulate some of what KDE and Gnome do, at less memory cost? The answer is
"yes". One of the features most requested by users over the years has been
session management, which is the focus of this article.

What is session management?

Session management allows the state of applications that are running to be

saved and remembered. This includes attributes such as the size of the

windows, their geometry (location on screen), and which page/desk it was on (if

you're using a virtual window manager which supports those).

It works by the session manager handing out client IDs. The ID is usually
given to an application's main window; its sub-windows (which we call
transient windows) do not get one, since they are tied to specific events
and only appear when those events are triggered within the application.

However, the parent window has to register itself directly with the session
manager, so that the session manager knows the originating window to which
any transient windows can be attached. Such a window has a property called:

WM_CLIENT_LEADER. This is used to talk to the session manager. A

further property WM_WINDOW_ROLE is used by fvwm to define the state of

the window. These underlying calls come from the X server itself, which

communicates them to the window manager that is running.

So a session manager is a program that handles these protocols,

talking both to the underlying X server and the window manager to determine

how these windows are to be set up. It is the job of the window manager, if

running under a session manager, to communicate with the session manager to

learn of these 'hints'.

There aren't that many true session managers out there. But for those that

do exist, getting them to work with fvwm can be a challenge. I shall look at

each in turn and evaluate their performance.

How does fvwm use Session Management?

In order for fvwm to use

session management, it must be compiled with --enable-sm at ./configure time.

Once this has been done, you can use any session manager you like.

When fvwm loads up, without the use of a session manager, it looks for a

defined file, usually: $HOME/.fvwm2rc or:

$HOME/.fvwm/.fvwm2rc

But fvwm, in its configuration file, allows us to define two startup/restart

sections. One for running under a session manager and the other without. As an

example, here is a sample InitFunction for fvwm:

DestroyFunc InitFunction

AddToFunc InitFunction

+ I Module FvwmBanner

+ I Exec xsetroot -solid cyan

+ I Exec xterm

+ I PipeRead 'Wait exec run_parts.rb'

This will load up normally each time fvwm loads without a session manager.

Yet the session manager specific startup looks like this:

DestroyFunc SessionInitFunction

AddToFunc SessionInitFunction

+ I Module FvwmBanner

Thus the user can keep separate definitions for running with and without
a session manager. Note that when fvwm runs under a session manager it
only looks for the SessionInitFunction (and related) sections, and does
not run the InitFunction sections at all.

It is also a bad idea to launch xterms and other applications from within

the session functions since this can often interfere with the way that the

window manager interprets how to handle the window.

In order for us to use the session manager though, we need to ensure that

it is loaded up in the correct order. Whenever one starts X, whether it is

from the command-line (startx) or from a graphical display manager such as

xdm, kdm, gdm, or wdm, a certain file is read: $HOME/.xsession.

Normally, it might look something like this:

#!/bin/bash

program1 &

program2 &

exec fvwm

In order to have the session manager work correctly we have to make sure

that it is the last program that is executed, hence:

#!/bin/bash

program1 &

program2 &

smproxy &

fvwm &

exec some_session_manager

Making sure that "some_session_manager" above is replaced by the

actual name of the session manager.

smproxy is required since there are some programs which do not

natively support the program calls that define session management. In such

instances smproxy will try and sniff them out.

xsm -- X Session Manager

This is the original session manager, and is quite limited compared to some

of the other session managers we'll be looking at. Using it with fvwm is
done exactly as described above. Once everything loads, you should see a

window which looks like the following...

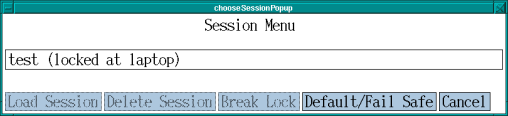

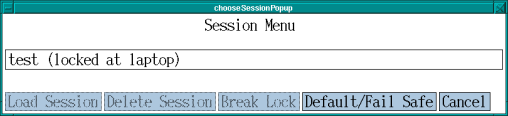

Figure 1: xsm's client window

This is pretty self-explanatory. By clicking on the 'Load Session' button,

you can select previous sessions to load. When you initially start X, this is

what you'll see. You can suppress this window, but to do so, you have to

create a session.

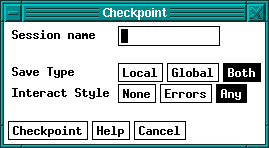

Figure 2: xsm's control window

Figure 2 shows what is presented after everything has loaded. Using this

window, you can get an idea of the applications that it already recognizes,

and save the session etc. The only drawback with using xsm is it is very

limited in the applications it can recognize. If the application is not

strictly X aware then xsm will not be able to handle it.

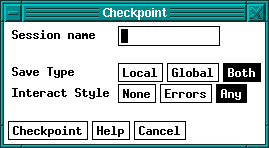

To save the state of your session (and hence to see whether xsm can identify
any more windows), you can press the "checkpoint" button to get a screen such
as Figure 3.

Figure 3: xsm's checkpoint window

From here you can enter the name of the session that you want to save. I

said earlier that you can by-pass Figure 2, by having it load up the session

name of your choice. Once you have saved the session, edit the file:

$HOME/.xsession and change the line: exec xsm to:

exec xsm -session [name] where '[name]' is the name of the

session.
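If you'd rather not edit the file by hand, the same change can be scripted. The following is a minimal sketch, assuming your ~/.xsession contains a line reading exactly "exec xsm"; the function name set_xsm_session is invented for this example, and session names containing a "/" would need a different sed delimiter.

```shell
#!/bin/sh
# set_xsm_session FILE NAME: rewrite a bare "exec xsm" line in FILE so that
# xsm loads the saved session NAME. (Hypothetical helper, not part of xsm.)
set_xsm_session () {
    tmp=$(mktemp) || return 1
    # Write the edited copy to a temporary file, then copy it back so the
    # original file keeps its permissions.
    sed "s/^exec xsm\$/exec xsm -session $2/" "$1" > "$tmp" && cat "$tmp" > "$1"
    rm -f "$tmp"
}

# Example (the session name "default" is only an illustration):
# set_xsm_session "$HOME/.xsession" default
```

Lines other than the bare "exec xsm" are left untouched, so running it twice does no harm.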

xsm also causes problems with fvwm in that you have to quit xsm in order to

save the session, since xsm is the governing process. I found this to be quite

annoying. I would, however, recommend it to anyone who uses simple apps, or to
someone who only wants certain apps to run and doesn't want the hassle of
installing Gnome or KDE to use the session features that they have.

Gnome-Session

This is the best session manager to use with fvwm. This is because fvwm is

Gnome-compliant and should work with it well (the specifics of the support are

to do with EWMH support). Unlike xsm, gnome-session

handles applications much more efficiently. Under Debian, this can be

installed by the command: apt-get install gnome-session. Just like xsm,

the ~/.xsession file will need modifying, this time to look like

this:

#!/bin/bash

program1 &

program2 &

exec gnome-session

Be advised that starting 'program1' and 'program2' above before the
session manager will cause two instances of each program to load each time
you start fvwm again: they are loaded as normal, and then the session manager
loads them again because it will (hopefully) have saved their state. That's just

something to bear in mind.

When you login to X this time, initially Gnome will load up -- don't

panic. The pain and suffering won't last for long. What we need to do is

to replace sawfish or metacity (depending on whether you're using Gnome1 or Gnome2)

with fvwm, while keeping gnome-session running so that when we save the

session it knows to load fvwm and not some other window manager.

To do that we can try and kick the current window manager out of the way

and have fvwm replace it directly. The command:

fvwm --replace &

...when run in an xterm might do the trick. If not, it will be a case of

interfacing with gnome-session itself. Oddly enough, there is a Gnome

application which provides this very interface:

gnome-session-properties. This is a really useful application for

tweaking the session manager. But for the purposes of getting fvwm running

under it we have to explicitly remove either sawfish or metacity.

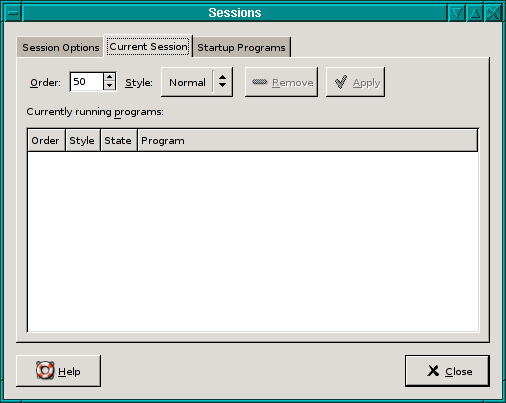

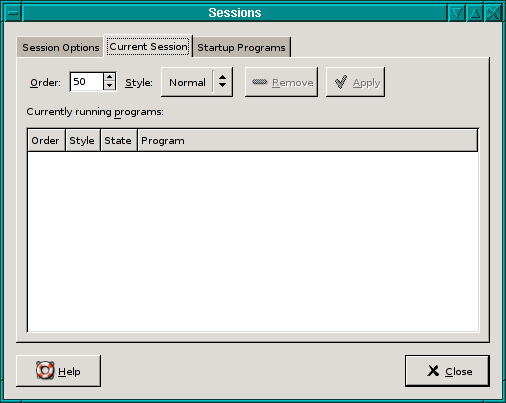

Figure 4: gnome-session-properties

Figure 4 shows (rather blankly) the programs that it knows about and has

loaded. Then all that remains is to kill fvwm that was running previously, by

typing into an xterm:

killall fvwm

Then, going back to the session-properties window, select the window
manager which is running (sawfish or metacity) and, by clicking on the 'Style'
button, set the active state to Normal. You must then click on 'Apply'.

What this has done is to ensure that when the session restarts the window

manager that was previously loaded isn't. Then in an xterm type:

killall [wm] && sleep 5s && fvwm &

Where [wm] above is either: metacity or sawfish. As soon as

that has worked, save the session. It should be pointed out that for those

applications that really aren't session aware, there is an option to have

gnome-session launch applications, by using the 'startup programs' tab (figure

4).

There is a known issue with all session managers (gnome-session
in particular) that causes them to spawn multiple instances of certain programs.
With fvwm this is most noticeable with xclock. All the information about which
programs to launch, etc., is stored in a file, so it is a simple task to fix.
This script (written in Ruby) will fix that
abnormality, should it become annoying. To use it simply do the following:

1. copy the script to /usr/local/bin

2. chmod 755 /usr/local/bin/cprocess.rb

3. edit the #! line in the script to point to the ruby binary

4. edit ~/.xsession, and add the following line:

ruby /usr/local/bin/cprocess.rb

before gnome-session loads.

That's really all there is to setting up and using gnome-session with

fvwm.

Conclusion

This has been a very brief look at how different session managers can be

used with fvwm. There are others out there such as KDE's ksmserver and

XFCE4's xfce-session, but I have not tried them with fvwm and do not

know what they are like. Session managers aside, there are also two modules

of interest native to fvwm, namely: FvwmSave and FvwmSaveDesk.

While these are not session managers, they do provide functionality very

similar to them. These will be discussed more fully in other articles next

month.

I write the recently-revived series "The Linux Weekend Mechanic", which was

started by John Fisk (the founder of Linux Gazette) in 1996 and continued

until 1998. I'm also a member of The Answer Gang.

I was born in Hammersmith (London UK) in 1983. When I was 13, I moved to

the sleepy, thatched roofed, village of East Chaldon in the county of Dorset.

I am very near the coast (at Lulworth Cove) which is where I used to work.

I first got interested in Linux in 1996 having seen a review of it in a

magazine (Slackware 2.0). I was fed up with the instability that the then-new

operating system Win95 had and so I decided to give it a go.

Slackware 2.0 was great. I have been a massive Linux enthusiast ever

since. I ended up with running SuSE on both my desktop and laptop computers.

While at school (The Purbeck

School, Wareham in Dorset), I was actively involved in setting up two

Linux proxy servers (each running Squid and SquidGuard). I also set up

numerous BASH scripts which allowed web-based filtering to be done via

e-mail, so that when an e-mail was received, the contents of it were added to

the filter file. (Good old BASH -- I love it)

I am now 18 and studying at University (Southampton Institute, UK), on a

course called HND Business Information Technology (BIT). So far, it's great.

Other hobbies include reading. I especially enjoy reading plays (Henrik

Ibsen, Chekhov, George Bernard Shaw), and I also enjoy literature (Edgar Allan
Poe, Charles Dickens, Jane Austen to name but a few).

I enjoy walking, and often go on holiday to the Lake District, to a place

called Keswick. There are numerous "mountains", of which "Great Gable" is my

most favourite.

I am also a keen musician. I play the piano in my spare time.

I listen to a variety of music. I enjoy listening to

Rock (my favourite band is "Pavement"; lead singer:
Stephen Malkmus). I also have a passion for 1960s

psychedelic music (I hope to purchase a copy of

"Nuggets" reeeeaaall soon).

Help Dex

By Shane Collinge

These images are scaled down to minimize horizontal scrolling.

To see a panel in all its clarity, click on it.

![[cartoon]](misc/collinge/378myduty.jpg)

All HelpDex cartoons are at Shane's web site,

www.shanecollinge.com.

![[BIO]](../gx/2002/note.png) Part computer programmer, part cartoonist, part Mars Bar. At night, he runs

around in a pair of colorful tights fighting criminals. During the day... well,

he just runs around. He eats when he's hungry and sleeps when he's sleepy.

Retrospectives

By Jim Dennis

Introduction

I've been thinking about doing a new column for Linux Gazette for a few
months now: looking back to our first issues and reading them with
an Epimethean perspective. (Epimetheus, of Greek mythology, was brother
to Prometheus and his counterpart; while Prometheus could see into the
future, Epimetheus had perfect "hindsight".)

So that's what this will be. It seems fitting

somehow that we should start the new regular column at issue 100,

reminiscent of the long running column in Scientific American for "50 and 100

Years Ago." Of course such numbers are completely arbitrary.

In future issues I might cover multiple back issues; or look for threads

that wove their way through a history of discussion.

In most cases I will be looking for things that have changed;

updates that need to be voiced. However, I expect that most of each

issue is still relevant; that only minor retrospective commentary would

be needed.

In this issue we'll be looking back at the very first

issue of Linux Gazette.

Long Live Slackware

John Fisk was trying Slackware 2.0.0, and had been using a 2400

baud dial up to get to the 'net via a VAX/VMS account. The first

version of Slackware I used was version 1.0 --- I'd been using the now

forgotten SLS (Soft Landing Systems) and Yggdrasil's "Plug & Play

Linux" before then and had ignored Slackware's pre-releases.

Slackware is still maintained and

is now up to version 9.1 --- and the project is still headed by

Patrick Volkerding.

John's install was only 120Mb.

For comparison, modern Red Hat and

Fedora installations require a

minimum of 250Mb just for the root filesystem! However, a fairly
minimal Debian can fit in

well under 120Mb; so we can't complain that Linux as a whole has become

bloated.

Long Live our PPP Connections

He presents a simple PPP "keepalive" shell script. (One could use
the persist directive with a modern pppd,
but some might still need something like the shell script.)

while [ -e /var/run/ppp0.pid ]; do

ping -c 1 $REMOTE_ROUTER > /dev/null

sleep $DELAY

done

Another approach would be:

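# Start a single ping in the background, discarding all of its output
ping -i $DELAY $REMOTE_ROUTER > /dev/null 2>&1 &
PINGPID="$!"
# Poll until pppd removes its pid file, then stop the ping
while [ -e /var/run/ppp0.pid ]; do
    sleep $DELAY
done
kill $PINGPID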

... which starts one ping process that sends a ping every $DELAY

seconds; then polls slowly on the pid file and, when that's gone it

kills the background task. This is no great technical improvement.

There's minor improvement by not spawning so many ping processes ---

we only load ping once and let it run like a daemon; then kill it when

we're done with it. So this alternative approach is only valuable for
edification: an example of how to manage a backgrounded task under

the shell.

Long Live Our Changes to /etc/motd and /etc/issue

(And also: Know thy system as thou would know thine own spouse!)

The next item amounts to a 2-cent tip: that Slackware

(among some other distributions) has rcS

scripts (rc.sysinit on Red Hat, Fedora, and

related distributions) that overwrite our /etc/motd and/or

/etc/issue files. So you have to comment out that code if you

want your changes to these files to persist.

My 5-penny tip over the top of that is that every Linux system

administrator should read their /etc/inittab file from

top to bottom and recursively follow everything it references by applying the following

procedure:

- For every filename you encounter:
  - Run the file command on it
  - If it's binary:
    - Read the man or info pages
    - Use any package manager to find out which package "owns" this program (dpkg -S or rpm -qf)
    - Peruse any other docs associated with that package
  - If it's a script of any sort:
    - Open it up in a text editor/viewer
    - Recurse
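The first pass of that checklist can be roughed out in shell. This is a sketch, not a finished tool: the helper names (paths_in, owner_of, describe) are invented here, the path matching is deliberately crude, and it assumes the file command is installed. The reading itself is still up to you.

```shell
#!/bin/sh
# paths_in FILE: list the absolute paths mentioned on non-comment lines.
paths_in () {
    grep -v '^[[:space:]]*#' "$1" | grep -o '/[A-Za-z0-9_./-]*' | sort -u
}

# owner_of FILE: ask whichever package manager is present who owns FILE.
owner_of () {
    if command -v dpkg >/dev/null 2>&1; then
        dpkg -S "$1" 2>/dev/null
    elif command -v rpm >/dev/null 2>&1; then
        rpm -qf "$1" 2>/dev/null
    fi
}

# describe FILE: say whether FILE is a script to read or a binary to look up.
describe () {
    if [ ! -e "$1" ]; then
        echo "$1: not found"
        return 0
    fi
    case $(file -bL "$1") in
        *directory*)     echo "$1: directory, list it and recurse" ;;
        *script*|*text*) echo "$1: script, open it in a pager and recurse" ;;
        *)               echo "$1: binary, read its man page; owner: $(owner_of "$1")" ;;
    esac
}

# Start from /etc/inittab, if this system has one.
START=/etc/inittab
if [ -r "$START" ]; then
    for p in $(paths_in "$START"); do
        describe "$p"
    done
fi
```

To recurse, feed each script it reports back through paths_in and describe.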

Following this procedure, you will wend your way through your entire

system start-up sequence. You will know almost EVERYTHING about how your

system boots up. (This ignores the possibility that you might have an

initrd, an initial RAM disk, with a /linuxrc

script or binary embedded in it).

As part of my LPI classes I teach this procedure and recommend that all

students do this for each new distribution that they ever try to manage.

Twiddle Dee Dum: Home at Last

In the next article we see our first 2-cent tip. ~ (the

"tilde" or "twiddle" character) is expanded by the shell to the current

user's home directory. I'd add that ~foo will look up the home

directory for the user "foo" and expand into that path. This notion

of "look up" can actually be quite involved on a Linux system ---

though it usually just means a search through the /etc/passwd file.

On other systems it would entail NIS, LDAP, Hesiod, or even MS

Windows "Domain" or "Active Directory" operations. It all depends

on the contents of the /etc/nsswitch.conf and the various

/lib/libnss* libraries that might be installed.
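To see that lookup in action, compare what the shell expands with what the name service switch reports. A small sketch, assuming a glibc-style system where the getent command is available; home_of is a name made up for this example.

```shell
#!/bin/sh
# home_of USER: print USER's home directory as resolved through NSS
# (whatever /etc/nsswitch.conf says: files, NIS, LDAP, ...).
home_of () {
    getent passwd "$1" | cut -d: -f6
}

echo ~          # the current user's home directory (taken from $HOME)
echo ~root      # root's home directory, looked up by the shell
home_of root    # the same lookup, done explicitly with getent
```

On an ordinary system the last two lines print the same path.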

Shell Tips, Tricks, Aliases and Custom Functions

The aliases he lists are all still valid. I might add another

tip to point to Ian MacDonald's bash

programmable completion project which is now shipped as examples with

the GNU bash sources; and is thus installed on many Linux distributions

by default. To use them, simply "source" the appropriate file, as

described in Ian's article under "Getting Started." Ian's article has

many other tips and tricks for bash and for the readline libraries against

which it's linked. (On my Debian systems Ian's bash completions are in

/etc/bash_completion).

In his next article John also

talks about bash custom functions. A nitpick and CAVEAT is appropriate

here. The version of bash that was in common use back then would accept

all those shell functions as he typed them. However, newer versions of

bash (2.x and later) require that we render them slightly differently:

# Now, some handy functions...

tarc () { tar -cvzf $1.tar.gz $1 ; }

tart () { tar -tvzf $1 | less ; }

tarx () { tar -xvzf $1 $2 $3 $4 $5 $6 ; }

popmail () { popclient -3 -v -u myname -p mypassword -o /root/mail/mail-in any.where.edu ; }

zless () { zcat $* | less ; }

z () { zcat $* | less ; }

... all we've done is insert semicolons before those closing

braces. This is required in newer versions of bash because it was

technically a parsing bug in older versions to treat the closing

brace as a separator/token. We could also have simply inserted

linebreaks before the closing braces. (To understand this consider

the ambiguity caused by: 'echo }' --- historically the } did not need

to be quoted like a ')' would be. If that doesn't enlighten you just

accept it as a quirk and realize that these old examples from 1995

must be updated slightly to run on newer versions of bash).
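For comparison, here is the linebreak form applied to the first of those functions, followed by the 'echo }' case. The quotes around $1 are my own addition (they keep filenames with spaces intact) and are not part of John's original.

```shell
#!/bin/sh
# Closing brace on its own line: no semicolon needed before it.
tarc () {
    tar -cvzf "$1.tar.gz" "$1"
}

# A } that is not in command position is just an ordinary word:
echo }
```

Both forms define the same function, so it is only a matter of taste which you use in your startup files.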

Zounds!!! Zany Sounds

In the next article the old links to sunsite.unc.edu are ancient and

obsolete. The sounds to which he was referring can still be found at:

... which has all the old sunsite.unc.edu

contents after two name changes (it was metalab.unc.edu

for awhile, too).

Alas I couldn't find the "Three

Stooges" sounds at the ibiblio site; but a quick Google

search suggests that we can get some audible zaniness at:

/etc/fstab for Filesystem Aliases

His article on /etc/fstab entries, particularly for

"noauto" devices like CD-ROMs and floppies is still relevant.

Essentially nothing has changed about that. Some new distributions

have programs like magicdev which run while

you're logged into the GNOME or other GUI, polling the CD-ROM drive

to automatically mount any disc you insert (and to detect the type

and optionally dispatch music players for audio CDs or launch file

browsers for file CDs, etc). Personally I detest these automount

features and disable them as soon as I can find the relevant GUI control

panel.

I'd still consider this to be a 2-cent tip of sorts.

Long Live Backups and Version Control!

For the next article (about making serialized backups of files before

editing them) I'd simply suggest using RCS or CVS. RCS is installed

with most Linux distributions. To use it, simply create an RCS directory

under any directory in which you wish to maintain some files in version

control. Then every time you're about to edit a config file therein,

use the command: ci -l $FILENAME;

the file will be "checked in" to the RCS directory. This will

automatically track each set of changes. You can always use the

rcsdiff command to view the differences between the current working file
and the most recent check-in, or between any two arbitrary versions by using
the appropriate -r switches. CVS is basically similar, but allows

you to maintain a centralized repository across the network ---

optionally using securely authenticated and encrypted ssh tunnels.

The advantage of tracking your files under CVS is that you can restore

your settings and customizations even after you've completely wiped

the local system (so long as you've maintained your CVS repository

host and its backups).
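A short RCS session might look like the following. It is a sketch that assumes the RCS tools (ci, rcsdiff) are installed; the function name rcs_demo, the scratch file motd and the log messages are made up for the example, and it works in a throwaway directory rather than in /etc.

```shell
#!/bin/sh
# rcs_demo DIR: walk through two check-ins of a scratch file inside DIR.
rcs_demo () {
    command -v ci >/dev/null 2>&1 || { echo "RCS tools not installed"; return 0; }
    (
        cd "$1" || exit 1
        mkdir -p RCS                        # history files (motd,v) land here
        echo "Welcome to the machine" > motd
        # -l checks in and keeps a locked working copy for further edits;
        # -t- and -m supply the description and log message without prompting.
        ci -l -t-"login banner" -m"initial version" motd
        echo "Maintenance on Friday" >> motd
        rcsdiff motd || true                # non-zero exit just means "files differ"
        ci -l -m"announce maintenance" motd
        rcsdiff -r1.1 -r1.2 motd || true    # compare any two revisions
    )
}

rcs_demo "$(mktemp -d)"
```

For real use, the same ci -l and rcsdiff commands apply in whichever directory holds your config files.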

Tutorials for CVS and RCS:

... there are several others on the web; Google for "RCS tutorial" or "CVS tutorial".

I'd also suggest that some of us look at the newly maturing Subversion at:

... which just attained the vaunted release version 1.0

this month.

In the next article: "Accessing Linux from DOS" I notice

that the old link for the LDP still works (graciously redirecting

us from the old sunsite.unc.edu/mdw/ URL to the current ibiblio

LDP mirror). Historical note: MDW are Matt Welsh's initials! Of

course the current canonical location for the LDP (Linux Documentation Project) is now:

As for the EXT2TOOL.ZIP link, it's dead. However, a quick perusal

of Freshmeat suggests that anyone who needs to access ext2/ext3

filesystems from an MS-DOS prompt might want to use Werner Zimmermann's

LTOOLS package (

also at: http://www.it.fht-esslingen.de/~zimmerma/software/ltools.html

Professor Zimmermann's home page). LTOOLS is the MS-DOS counterpart

to the mtools package for Linux.

Apparently the LTOOLS package includes a "web interface" (one

utility in the package can serve as a miniature web server for

the MS Windows "localhost") and it includes a Java GUI as well.

LTOOLS allegedly still supports MS-DOS, but also has features

for later Microsoft operating systems like '95/'98, NT, ME, XP,

and Win 2000. It's also apparently portable to Solaris and other

versions of UNIX (So you can access your ext2 filesystems from

those as well).

mtools allows Linux users to access MS-DOS

filesystems, mostly floppies, but also hard drive partitions, using

commands like: mcopy a:foo.zip .

or mtype b:foo.txt or just mdir (defaults to the A: drive, /dev/fd0

on most installations). mtools has been included with mainstream Linux

distributions for most of the last decade, and has been available and

widely used on other versions of UNIX for most of that time as well.

However, when I'm teaching my LPI courses I still find that it's new to

more than half of the sysadmins I teach. The principal advantages of

mtools are: you don't have to mount and unmount the floppies; you can

mark the mtools binary SGID group "floppy" and set the privileged=1 flag in

/etc/mtools.conf to allow users to access MS-DOS filesystems

on floppies from their own accounts (without sudo etc).
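
For anyone without a floppy drive handy, here is a sketch of the same mtools commands run against a disk image file instead of A:. It assumes a reasonably recent mtools that supports the -i (image file) option; the file names are invented for the example.

```shell
# Sketch only: assumes mtools with the -i option is installed.
# floppy.img and foo.txt are hypothetical names.
if command -v mformat >/dev/null 2>&1; then
    imgdir=$(mktemp -d)
    img="$imgdir/floppy.img"
    echo "hello from Linux" > "$imgdir/foo.txt"

    mformat -C -f 1440 -i "$img" ::       # create and format a 1.44 MB image
    mcopy -i "$img" "$imgdir/foo.txt" ::  # copy a file into its root directory
    mdir -i "$img" ::                     # list the directory, DOS style

    # Read the file back out of the image without mounting anything.
    floppy_text=$(mtype -i "$img" ::foo.txt)
    echo "$floppy_text"
else
    echo "mtools not found; install the mtools package first" >&2
fi
```

With a real drive you would drop the -i option and use a: in place of the double colon, as in the commands quoted above.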

The last article in this premier issue was one on building a Linux kernel.

The basic steps outlined there have remained true for the last eight

years. Today we use bzImage instead of the old zImage make target.

Also, I usually use:

make menuconfig; make dep

make clean bzImage modules modules_install install

... and now, with the recent release of the 2.6 kernels we'll be

dispensing with the "make dep" step (which was used to make or modify

sub-Makefiles among other things). Also the new 2.6 kernel builds

are, by default, very quiet and clean. Try one to see what I

mean!
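
To make the difference between the two kernel series concrete, here is a tiny sketch that only prints the build steps rather than running them. The function name kernel_build_steps is made up for this example, and it assumes that anything other than 2.6 follows the older sequence with "make dep".

```shell
# Sketch only: prints the commands, it does not run them.
# kernel_build_steps is a hypothetical helper, not a standard tool.
kernel_build_steps() {
    echo "make menuconfig"
    case $1 in
        2.6*) ;;                  # 2.6 dispenses with the "make dep" step
        *)    echo "make dep" ;;  # assumed for older series such as 2.4
    esac
    echo "make clean bzImage modules modules_install install"
}

echo "--- 2.4 ---"
kernel_build_steps 2.4
echo "--- 2.6 ---"
kernel_build_steps 2.6
```

Run the printed commands from the top of the kernel source tree; the install targets normally need root.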

Another minor change: newer kernels can no longer be booted raw

from floppies. As of 2.6 Linux always requires some sort of boot loader

(SYSLINUX, GRUB, LILO, LOADLIN.EXE, etc). The rdev

command is basically useless on modern kernels (though one might still

use its other features to patch in a default video mode, for example).

This isn't a major issue as almost everyone has almost always used

a bootloader through the history of Linux; the ability to pass kernel

command line parameters is just too bloody indispensable! Of course Linux

kernels on other architectures such as SPARC or PowerPC

have their own formats and bootloaders.

Conclusion

Overall most of the content of this old issue, from almost nine years ago,

is still usable today. In less than 3 pages I've summarized it and

pointed out the few things that have to be considered when using this

information on modern systems, updated a few obsolete URLs, and just

pointed to some newer stuff in general.

That doesn't surprise me.

Linux follows the UNIX heritage. Things that people learned about UNIX 30

years ago are still relevant and useful today. Things we learned about

Linux 10 years ago are now relevant on new Mac OS X systems. The legacy

of UNIX has spanned over half of the history of electronic computing.

Jim is a Senior Contributing Editor for Linux Gazette, and the

founder of The Answer Guy column (the precursor to The Answer Gang).

Jim has been using Linux since kernel version 0.97 or so. His first

distribution was

SLS (Soft Landing Systems). Jim taught

himself Linux while working on the technical support queues at

Symantec's Peter Norton Group.

He started by lurking alt.os.minix and alt.os.linux on USENET

netnews (before the creation of the comp.os.linux.* newsgroups), reading them

just about all day while supporting Norton Utilities, and

for a few hours every night while waiting for the rush-hour traffic to subside.

Jim has also worked in other computer roles, and as an electrician and

a crane truck operator. He has worked in many other roles too: he's been a

graveyard dishwasher, a janitor, and a driver of school buses, taxis, pizza

delivery cars, and even did some cross-country, long-haul work.

He grew up in Chicago and has lived in the inner city, the suburbs,

and on farms in the midwest. In his early teens he lived in Oregon--

Portland, Clackamas, and the forests along

the coast (Brighton). In his early twenties, he moved to

the Los Angeles area "for a summer job" (working for his father, and learning

the construction trades).

By then, Jim had met his true love, Heather, at a

science-fiction convention. About a year later they started

spending time together, and they've now been living together for

over a decade. First they lived in Eugene, Oregon, for a year, but now they

live in Silicon Valley.

Jim and Heather still go to SF cons together.

Jim has continued to be hooked on USENET and technical mailing

lists. In 1995 he registered the starshine.org domain as a birthday gift to

Heather (after her nickname and favorite Runequest persona). He's participated

in an ever changing array of lists and newsgroups.

In 1999 Jim started a book-authoring project (which he completed

after attracting a couple of co-authors). That book, Linux System

Administration (published 2000, New Riders Associates), is not

a rehash of HOWTOs and man pages. It's intended to give a high-level

view of systems administration, covering topics like

Requirements Analysis, Recovery Planning, and Capacity Planning.

His book is intended to build upon the works of Aeleen Frisch

(Essential System Administration, O'Reilly & Associates) and

Nemeth, et al (UNIX System Administration Handbook, Prentice

Hall).

Jim is an active member of a number of Linux and UNIX users' groups

and has done Linux consulting and training for a number of companies

(Linuxcare) and customers (US Postal Service). He's also presented

technical sessions at conferences (Linux World Expo, San Jose and

New York).

A few years ago, he volunteered to help with misguided technical

questions that were e-mailed to the editorial staff at the Linux

Gazette. He answered 13 questions the first month. A couple

months later, he realized that these questions and his responses had

become a regular column in the Gazette.

"Darn, that made me pay more attention to what I was saying! But I

did decide to affect a deliberately curmudgeonly attitude; I didn't

want to sound like the corporate tech support 'weenie' that I was

so experienced at playing. That's not what Linux was about!"

(curmudgeon means a crusty, ill-tempered, and usually old man,

according to the

Merriam-Webster OnLine dictionary.

The word hails back to 1577, origin unknown, and originally meant miser.)

Eventually, Heather got involved and took over formatting the column,

and maintaining a script that translates "Jim's e-mail markup hints"

into HTML. Since then, Jim and Heather have (finally) invited other

generous souls to join them as The Answer Gang.

Ecol

By Javier Malonda

The Ecol comic strip is written for escomposlinux.org (ECOL), the web site that

supports es.comp.os.linux, the Spanish USENET newsgroup for Linux. The